Frigate is brilliant at what it does. Object detection, recording, notifications, the whole NVR side of things works beautifully. But the live view in the default web UI uses jsmpeg, and jsmpeg is rough. High latency, noticeable CPU load in the browser, and the image quality is whatever jsmpeg feels like giving you that day.

The good news is that Frigate already ships with go2rtc built in, and go2rtc can serve the same camera streams over MSE (Media Source Extensions) and WebRTC. Both of those are real, modern, browser-native video standards. The live view goes from "kind of works" to "basically instant", and your CPU stops crying.

Here is how I set it up.

the problem, in one screenshot's worth of words

Default Frigate live view on my setup:

- 2 to 4 seconds of latency

- visible compression artifacts

- browser tab pinning a CPU core at 30 to 40 percent

- Chrome occasionally just giving up and showing a black rectangle

Not unusable, but not something I wanted to leave running on a dashboard.

what go2rtc gives you

go2rtc is a tiny streaming server that can ingest pretty much any camera protocol (RTSP, RTMP, ONVIF, HomeKit, you name it) and re-stream it to pretty much anything else (WebRTC, MSE, HLS, MJPEG). Frigate bundles it, so you do not need to install anything separately. You just need to configure it.

The key idea: instead of pointing Frigate directly at your camera's RTSP feed, you point go2rtc at the camera, and then point Frigate at go2rtc. One extra hop, but now go2rtc has the stream and can hand it to the web UI over a proper protocol.

config

In your Frigate config.yml, add a go2rtc section at the top level, before the cameras section:

```yaml
go2rtc:
  streams:
    front_door:
      - rtsp://user:password@<camera-ip>:554/stream1
      - "ffmpeg:front_door#audio=opus"
```

The first entry in each stream is the actual RTSP source from the camera. The second entry is a go2rtc trick: it tells go2rtc to take the stream it just defined (front_door) and re-encode the audio to Opus. WebRTC in browsers handles Opus (and G.711, i.e. PCMA/PCMU) but not AAC, which is what a lot of cheap cameras send, so this transcode is usually necessary if you want sound.

If you do not care about audio, you can drop the second line entirely.

Then, in the cameras section, change your existing camera definitions to pull from go2rtc instead of directly from RTSP:

```yaml
cameras:
  front_door:
    ffmpeg:
      inputs:
        - path: rtsp://127.0.0.1:8554/front_door
          input_args: preset-rtsp-restream
          roles:
            - record
            - detect
    live:
      stream_name: front_door
```

A few things happening here:

- `127.0.0.1:8554` is go2rtc's internal RTSP port, running inside the same container as Frigate.

- `preset-rtsp-restream` is a Frigate preset that sets up the right ffmpeg args for pulling from go2rtc.

- `live.stream_name: front_door` tells the Frigate web UI which go2rtc stream to use for the live view.

Restart Frigate, and the live view should now use MSE by default, with a WebRTC option if your browser supports it. Both will be noticeably faster than jsmpeg.

MSE vs WebRTC

MSE is slightly higher latency (maybe half a second to a second) but rock solid. Works in every modern browser, handles network hiccups gracefully, and does not care much about your NAT setup. This is what you want for the default dashboard view.

WebRTC is near-zero latency, usually under 200ms, which is the closest you can get to "looking through the camera in real time". The tradeoff is that WebRTC needs to negotiate a peer-to-peer connection, which can get fiddly if your Frigate is behind reverse proxies or weird NAT setups. Inside a LAN it just works. Through Traefik with WebSocket support configured, it works. Through a VPN, it works. I only had trouble when I briefly tried to expose it publicly, at which point I decided I did not need to expose it publicly.
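For WebRTC outside the simplest LAN setups, go2rtc needs to know which address to advertise to the browser during negotiation. Frigate's documentation handles this with go2rtc's `webrtc.candidates` list. This sketch assumes a few things: the Frigate host's LAN IP is `192.168.1.10` (a placeholder), port 8555 is published from the container over both TCP and UDP, and the stream is the same `front_door` one from earlier.

```yaml
go2rtc:
  streams:
    front_door:
      - rtsp://user:password@<camera-ip>:554/stream1
      - "ffmpeg:front_door#audio=opus"
  webrtc:
    candidates:
      # LAN address of the Frigate host (placeholder, use your own)
      - 192.168.1.10:8555
      # let go2rtc discover its external address via STUN
      - stun:8555
```

Inside a LAN the first candidate is usually all you need. The STUN entry only matters once clients connect from outside.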

Frigate's UI lets you pick per-camera, and it will fall back automatically if one does not work.

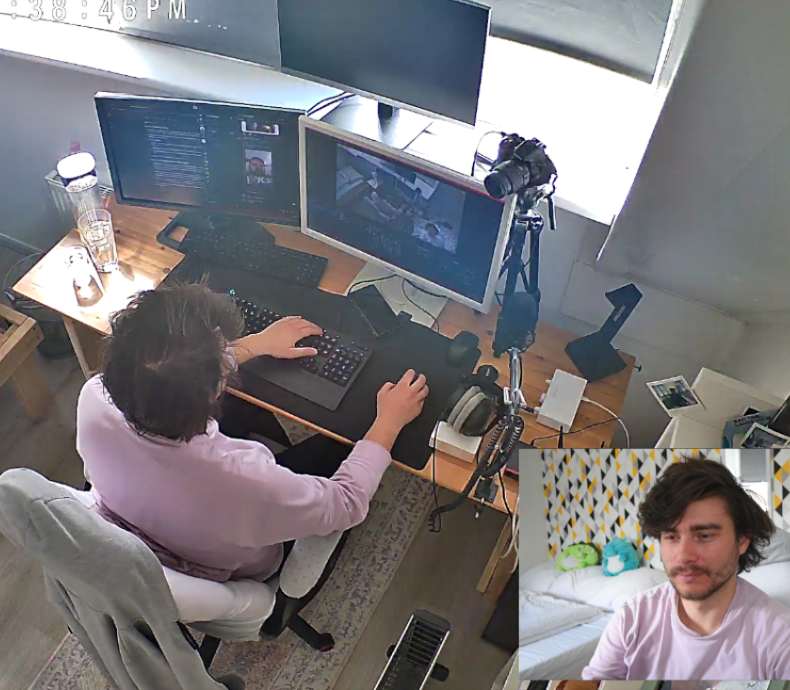

OBS trick

Here is the part I actually did this for. I wanted the driveway camera pinned to a corner of my desktop, always visible, without a whole browser tab dedicated to it. OBS Studio has a Browser Source, and go2rtc serves a standalone stream page, so:

- In OBS, add a new source, pick "Browser".

- For the URL, use:

http://frigate.hexie.dev:1984/stream.html?src=front_door&mode=webrtc

(Replace with your own Frigate hostname and camera name. Port 1984 is go2rtc's API and web port, not the Frigate web UI port, so it has to be reachable from the machine running OBS.)

- Set the width and height to match the camera's resolution, or whatever you want to display.

- Check "Shutdown source when not visible" to save CPU when the scene is inactive.

- Check "Refresh browser when scene becomes active" so it reconnects cleanly.
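The `stream.html` page is served by go2rtc itself on port 1984. If Frigate runs in Docker, that port, and the WebRTC port 8555, may need to be published before OBS on another machine can reach them. This is a minimal sketch that assumes Docker Compose and a service named `frigate`. Keep whatever mapping you already use for the Frigate UI.

```yaml
# docker-compose.yml excerpt (assumes the service is called "frigate")
services:
  frigate:
    ports:
      # ...your existing Frigate UI port mapping stays here
      - "1984:1984"      # go2rtc API and stream.html (no auth by default, keep it on the LAN)
      - "8554:8554"      # go2rtc RTSP restream, only needed for consumers outside the container
      - "8555:8555/tcp"  # WebRTC over TCP
      - "8555:8555/udp"  # WebRTC over UDP
```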

That is it. You now have a live camera feed as a proper OBS source, which means you can composite it with anything else, route it through a virtual camera, pipe it into a dashboard, whatever. On my setup the latency is barely perceptible and the CPU cost is a rounding error.

If you want MSE instead of WebRTC in the OBS Browser Source, swap webrtc for mse in the URL. MSE is the safer bet if WebRTC's peer-to-peer negotiation gives you trouble, for example through a reverse proxy or awkward NAT, because it only needs the single connection to go2rtc.

gotchas

Traefik and WebSockets. If you are reverse-proxying Frigate through Traefik (which I am), WebRTC needs WebSocket upgrade support. Traefik handles this automatically for most services, but if you have a strict middleware chain, double check that websocket upgrade headers are being passed through. The symptom is MSE working fine and WebRTC silently failing.

Audio codec mismatches. If you skip the ffmpeg:front_door#audio=opus line and your camera sends AAC, the video will work but audio will be silent in WebRTC. MSE might still play it, since MSE handles AAC. This is confusing the first time it happens.

Camera substreams. A lot of cheap cameras have a main stream (high res, high bitrate) and a sub stream (low res, low bitrate). For the live view, use the sub stream. For recording and detection, use the main stream. You can configure both in go2rtc and reference them separately in Frigate. This alone can cut your bandwidth and CPU load dramatically.
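Here is one way to wire that up, following the split described above: sub stream for the live view, main stream for recording and detection. This is a sketch under stated assumptions. The `front_door_sub` stream name is hypothetical, and so is the `stream2` path; sub stream paths vary by camera vendor, so check yours.

```yaml
go2rtc:
  streams:
    front_door:                 # main stream: high res, high bitrate
      - rtsp://user:password@<camera-ip>:554/stream1
      - "ffmpeg:front_door#audio=opus"
    front_door_sub:             # sub stream (hypothetical name and path)
      - rtsp://user:password@<camera-ip>:554/stream2

cameras:
  front_door:
    ffmpeg:
      inputs:
        - path: rtsp://127.0.0.1:8554/front_door
          input_args: preset-rtsp-restream
          roles:
            - record
            - detect
    live:
      stream_name: front_door_sub   # live view pulls the lighter stream
```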

result

Live views are now basically instant. The browser CPU load dropped to single digits. The OBS Browser Source runs my driveway camera in the corner of my main monitor all day without ever hiccuping. And I no longer flinch every time I click "Live" in the Frigate UI.

Small change, big quality of life improvement. This is most of what the homelab is, honestly: finding the one config line or architectural tweak that turns "works, technically" into "works, properly".